mallika parulekar

I'm a master's student in Computer Science at Stanford University, specializing in Artificial Intelligence. I'm broadly interested in applying machine learning to high-impact problems in learning and robotics.

Most recently, I worked on building LLM-based title generation for Docs at Google and on improving content demand modeling at Netflix.

I completed my undergraduate degree in Computer Science at UC Berkeley. I was a research assistant at BAIR in Professor Goldberg's AUTOLab, where I worked on robot grasping and cable manipulation using analytic theory and deep learning. While at Berkeley, I interned at Meta on recommendation systems, at Caro on full-stack development, and at Abacus.AI on anomaly detection.

I love teaching, and was a TA for Reinforcement Learning in Winter 2026 and previously for Discrete Math at Berkeley :)

In my free time, I enjoy playing ultimate frisbee with Stanford Women's Ultimate and am currently a co-captain of the B team, Firefly.

Research

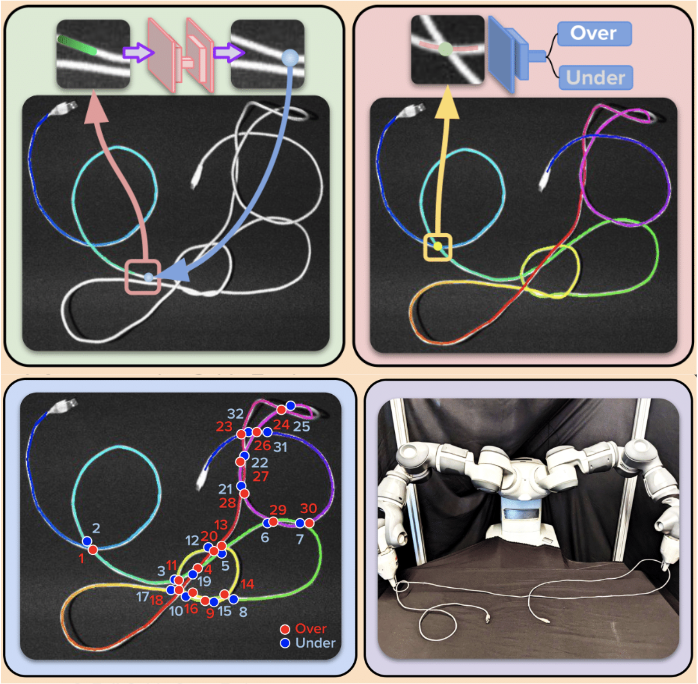

HANDLOOM: Learned Tracing of One-Dimensional Objects for Inspection and Manipulation

Vainavi Viswanath*, Kaushik Shivakumar*, Mallika Parulekar+, Jainil Ajmera+, Justin Kerr, Jeffrey Ichnowski, Richard Cheng, Thomas Kollar, Ken Goldberg

Conference on Robot Learning (CoRL), 2023. Oral Presentation.

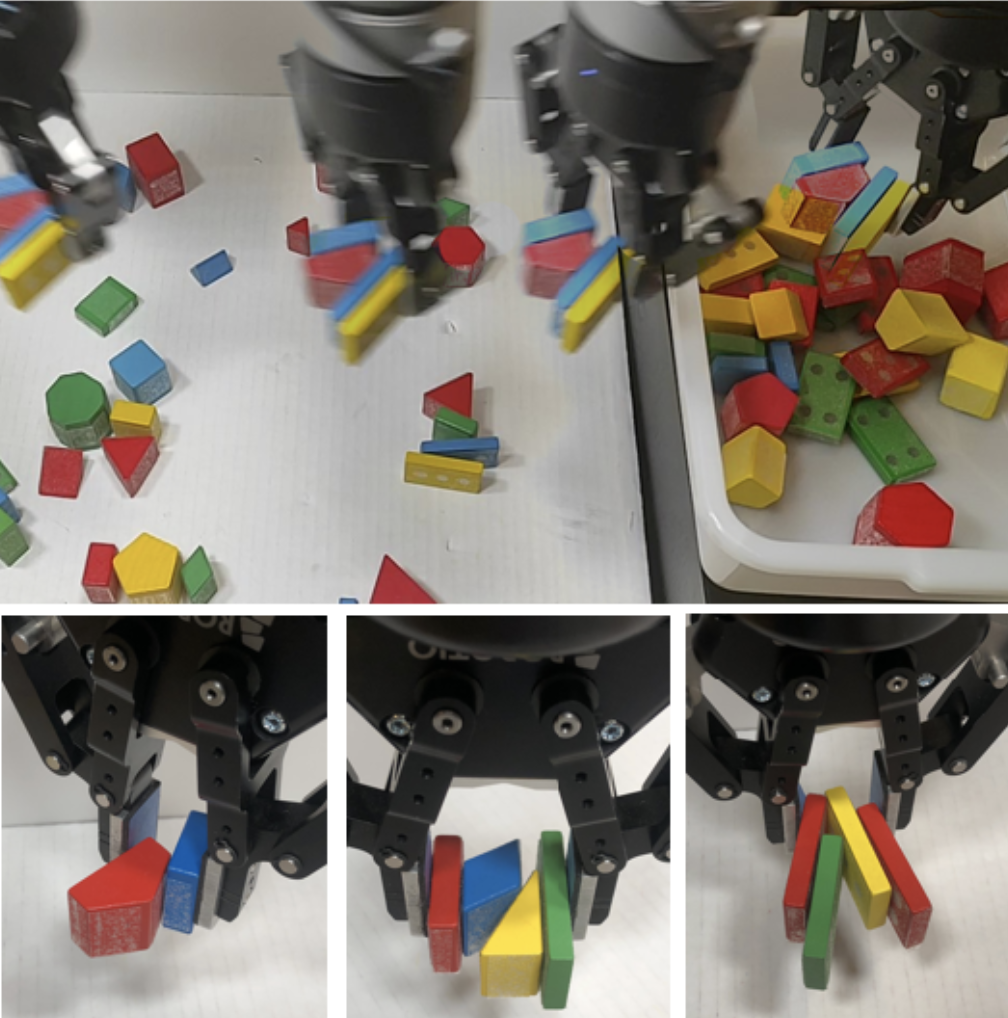

Learning to Efficiently Plan Robust Frictional Multi-Object Grasps

Wisdom C. Agboh*, Satvik Sharma*, Kishore Srinivas, Mallika Parulekar, Gaurav Datta, Tianshuang Qiu, Jeffrey Ichnowski, Eugen Solowjow, Mehmet Dogar, Ken Goldberg

International Conference on Intelligent Robots and Systems (IROS), 2023.

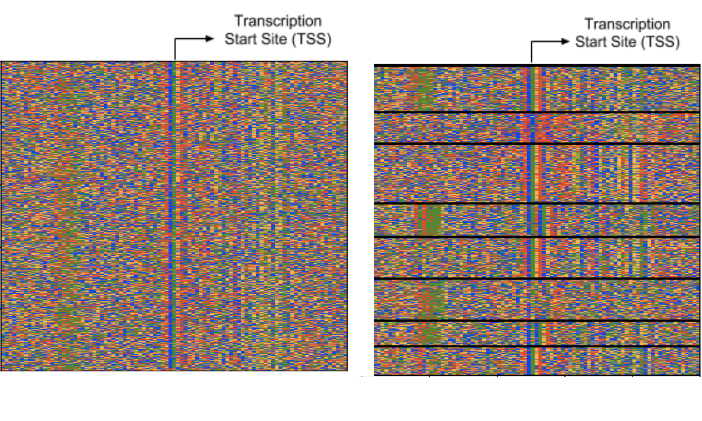

MINDFUL: A Method to Identify Novel and Diverse Signals with Fast, Unsupervised Learning

Mallika Parulekar, Leelavati Narlikar

Great Lakes Bioinformatics Conference (GLBIO), 2019.

Projects

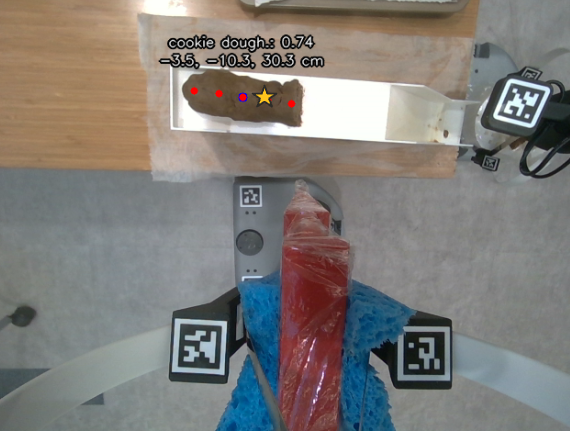

CookieBot: Automated Cookie Making with Dual Robot System

Aditya Dutt, Mallika Parulekar, Aaarya Sumuk, Harshvardhan Agarwal

Stanford Robotics Center, 2025.

Designed and built a two-robot system to cut, hand off, and flatten cookie dough slices using 3D-printed tools. Used Grounding DINO and Segment Anything (SAM) for vision.

Ultimate Vision: Vision-based Autoregressive Frisbee Tracking System

Mallika Parulekar, Yash Kankariya

Stanford CS 231N, 2025.

Created Ultimate Vision, a hybrid tracking system that localizes a frisbee across multiple frames by combining zero-shot segmentation models with a custom-trained ResNet with a regression head.